The opportunity to develop e-learning courses for the Robert H. Smith Center for the Constitution was not something to be sniffed at. With experience in curriculum design and in synchronous, traditional, and experiential teaching under my belt, the opportunity to develop online courses was extremely appealing.

With the sweets, however, come the sorrows.

That’s not a bad thing. The “sorrows” give us the opportunity to explore just why things didn’t quite work the way we wanted. So why didn’t the courses produced by the Center for the Constitution map onto the expectations of the stakeholders? That’s a complex question and one filled with opinion, so it’s grand that this is a personal website where I can get busy with the opinion.

COURSE DESIGN

The original courses designed by the Center were predicated upon one central feature: that they should take the learner a certain amount of time to complete so as to mirror the “contact hours” required for continuing education units (CEUs). The algorithm was simple: for every hour spent on a course, you received 0.1 CEUs. Thus the Center built courses around scripts of 60,000+ words and videos that would take 1-2 hours to (optionally) watch. Okay.

They also created a series of “guesstimate” multiple-choice assessments in their LMS with a grade threshold of 0%. Hands were tied by the nature of the Canvas LMS at the time, but the result was a box that learners would enter, click through all of the scholar-produced text, ignore the expensive videos, and, if they needed the CEU, finish the course in 15-25% of the allotted time.

Oh, yes. The courses were also meant to be “evergreen.” That is, you would produce one course and it would never require any future updates.

So, how did this happen?

When it comes down to it, it happened because of the lack of course design. Courses were predicated upon scholar-produced scripts of 35,000-80,000 words or more that were only seen after months of writing. There was no discussion between the instructional designer (that would be me) and the scholars, and once the script had passed through a phase of peer review, an average of six weeks was required to deploy the course. The result? So many walls of text, most of them blocks to actual learning, which was frustrating given that the content was actually really good.

THE FIX

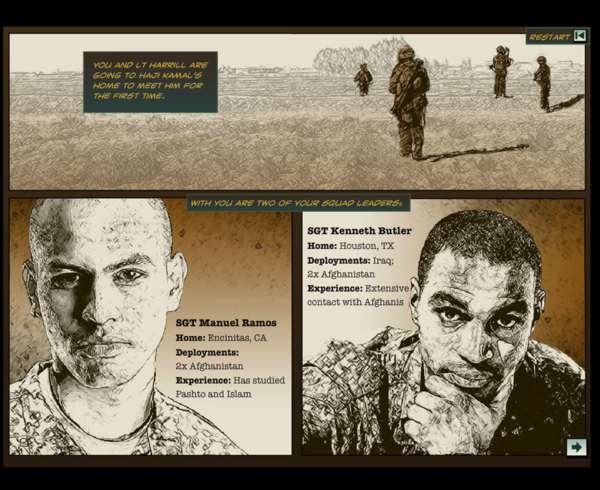

The fix involved one thing: changing the design paradigm, putting the design process front and center rather than relegating it to an afterthought once the scholar had produced their script.

This is where the successive approximation model (SAM) came into force. And it was awesome. Rather than just contracting with the subject matter expert, the SAM-based process engaged them to design the course concept, shell, and valued assessments. Rather than remaining distant, the scholar gained ownership over the project and was invested in it. The courses explored better design concepts such as scenario-based learning, gamification, and micro-learning. And the scholar? They showed off their courses to their peers as e-learning done right.